Active Objects, Surfaces and Zones

Exploring the potential of luminous surfaces, interactive controls, and digital content for retail applications: 25+ years ago!

By Brad Koerner

Let’s jump straightaway to the conclusion, shall we? The very last paragraph of my thesis, written way back in the fall of 2000 when I was just a wee youngster:

To introduce interactive narratives into the built environment, designers must create a new language of dynamic elements capable of sensing and responding to a space’s inhabitants. These dynamic surfaces, objects and zones will dramatically alter traditional notions of architectural space, creating new conduits between the physical and virtual worlds. Designers will need to consider their environments as conceptual interfaces, defining those environments as specific places through the introduction of particular narratives. These spaces will have a living presence, with their dynamic fusion of light and the human body blending two seemingly disparate worlds together and reinvigorating the significance of our tangible experiences.

So how exactly did I reach such an incredibly forward-thinking conclusion, way back in the fall semester of 2000, as I completed my Master of Architecture degree at Harvard’s Graduate School of Design? This was at a time when most people barely knew the Internet existed, and those progressive enough to actually be using it connected via dial-up modems with software delivered on 3.5″ floppies.

For the first time I will dig into my archives and publish my research online, much of which I believe is still highly relevant to today’s hype around the Internet of Things and omnichannel convergence. Many of the fundamental concepts I explored in active sensing and luminous surfaces may help those of you working on IoT, embedded lighting, or modern retail design.

First, the slideshow of my final thesis document:

For those of you romantics out there, if you want to find the actual printed version (complete with included CD-ROM!) you can find it here in Harvard’s library system. Or if you’re more of a person of the 21st century, download the PDF version here:

I won’t repeat the content in the thesis document – but let’s explore the context, content and relevance of the research. Here goes….

Part I: Preface – The Story of My Thesis

I earned two degrees in architectural design. So how exactly did I wind up researching interactive retail lighting controls for my final design thesis?

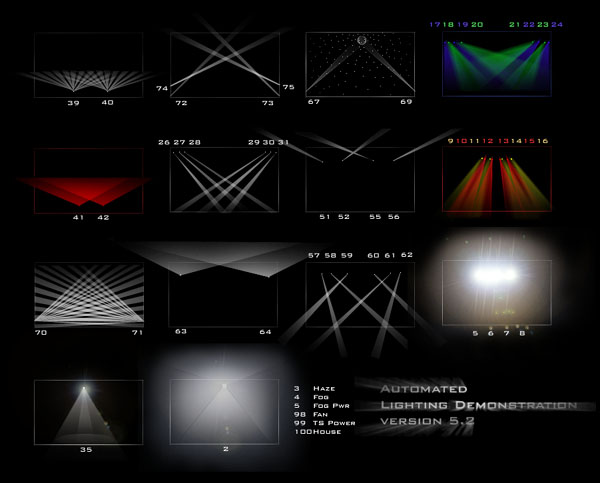

During my undergraduate program at the University of Virginia’s School of Architecture, I became fascinated with theatrical lighting. I took several courses on theatrical lighting design with professor Lee Kennedy at UVa’s School of Drama. Most of the theatrical lighting technologies at the time were quite advanced and basically unheard of in architectural design. Lee’s enthusiasm for the art and science of lighting opened my eyes to a mix of technologies that I realized would clearly impact the world of architectural design.

When I attended Harvard University’s Graduate School of Design, I continued to explore advanced concepts in architectural lighting, fusing technologies from the theatrical world into the architectural world. Instead of the typical final thesis in which I would design a fantasy building, I opted to explore advanced lighting technologies and how they would impact the future of architecture. Michelle Addington, who is now dean of The University of Texas at Austin School of Architecture, was my thesis adviser (and her perspective on the intersection between advanced tech and progressive design concepts was simply amazing – Michelle is really who drove me into the future).

You need to understand how limited architectural lighting controls were at that time. To set the historical context a bit: My top-of-the-line PC in 1998 ran Windows NT on an 800 MHz processor with 128 MB of RAM (that’s 128 megabytes…NOT gigabytes!). Digital cameras were expensive, poor-quality novelties (so I had to shoot on Kodachrome and scan the slides). Steve Jobs had just released the original iMac, which made a big splash by ditching the 3.5″ floppy disk drive but promised to make it easier for average folks to connect to the internet…by modem, of course! The World Wide Web was only about 5 years old at this point and in the midst of the infamous dot-com bubble, but early sites like Amazon.com or Outpost.com clearly showed the immense promise of e-commerce. Architectural lighting had only very limited, strictly proprietary control systems, all based on turning individual dimmers on or off, or somewhere in between.

Part II: A confluence of technologies…

Around 1999, several nascent technologies emerged that, together, would lead to a profound change in architectural lighting design.

Digitally Controlled Lighting

By 1999, the DMX control standard, along with robotic automated lighting fixtures, had already radically changed entertainment lighting. Some of this spilled over into the architectural world, a category that came to be known as “architainment” lighting. Color Kinetics was born in 1997 when robotics engineers from Carnegie Mellon combined RGB LEDs with PWM and digital controls. With the first “solid state” digitally controlled lighting fixture, the promise of no maintenance finally made advanced lighting more suitable to permanent installations.
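For readers unfamiliar with how digital control meets an RGB LED fixture, here is a minimal sketch. It assumes a hypothetical fixture that listens on three consecutive DMX channels (red, green, blue) and maps each 8-bit level linearly to a pulse-width modulation (PWM) duty cycle. The function names are illustrative only, and real fixtures may use other channel layouts or dimming curves.

```python
from typing import Tuple

DMX_UNIVERSE_SIZE = 512  # a DMX512 universe carries up to 512 channels of 8-bit data


def set_rgb(frame: bytearray, start_channel: int, rgb: Tuple[int, int, int]) -> None:
    """Write an RGB color into three consecutive DMX channels (1-based address)."""
    if not 1 <= start_channel <= DMX_UNIVERSE_SIZE - 2:
        raise ValueError("start_channel must leave room for three channels")
    for offset, level in enumerate(rgb):
        if not 0 <= level <= 255:
            raise ValueError("DMX levels are 8-bit values (0-255)")
        frame[start_channel - 1 + offset] = level


def pwm_duty_cycle(level: int) -> float:
    """Assumed linear mapping from a DMX level to the fraction of time an LED is on."""
    return level / 255


# One universe of channel data, all channels initially off.
frame = bytearray(DMX_UNIVERSE_SIZE)
set_rgb(frame, start_channel=10, rgb=(255, 128, 0))  # an orange tone
duties = [pwm_duty_cycle(level) for level in frame[9:12]]
```

Mixing color then comes down to sending three numbers: each LED color is switched on and off rapidly, and the duty cycle sets how bright it appears.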

Networking and the “Internet of Things” in 1998

A couple of early networked lighting technologies launched around this time, like ETC’s Unison system or Strand’s system (such as the ParkNet installation at Islands of Adventure), which incorporated IP networking to push DMX over long distances. It was obvious that the potential existed to push digital control and digital content throughout a large building or even an urban-scale project.

Lee Kennedy at UVa experimented with a Rosco Horizon controller, an early theatrical DMX control system that replaced the typical lighting console with a PC-based application. One aspect of the Horizon was especially distinctive: The Horizon software provided an interface for HTML triggering of its channels and cues. Lee built HTML “magic sheets” that gave him graphical, web-based control of his lighting system. The “Internet of Things” long before the concept even existed!

Light as a Material

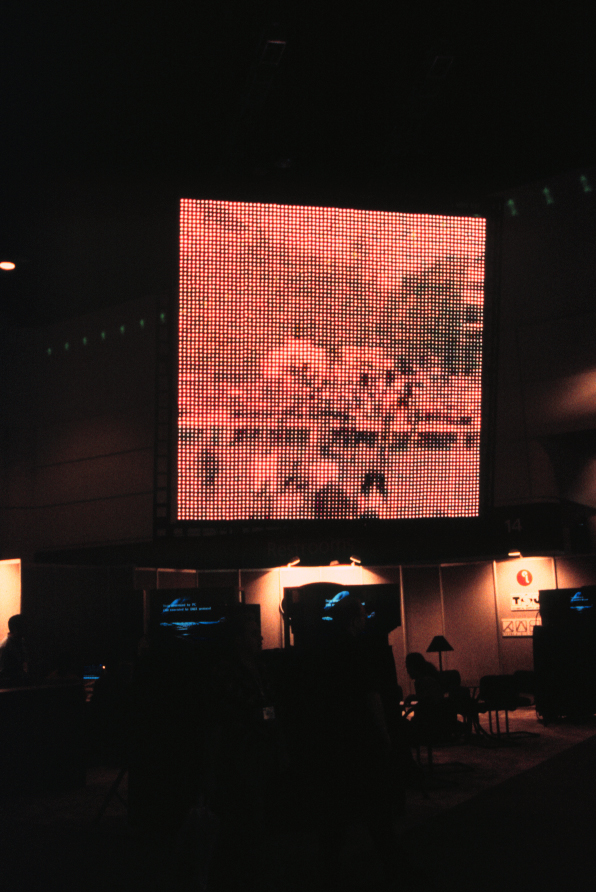

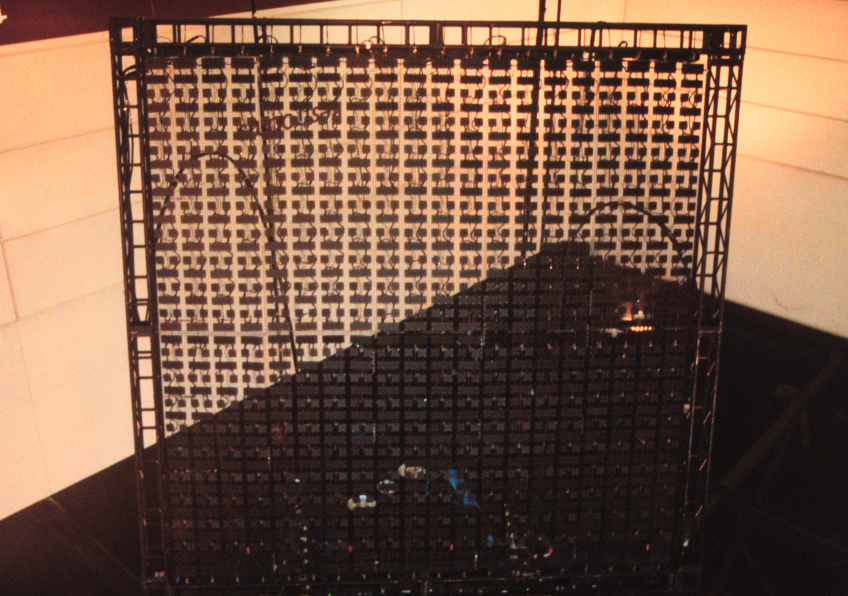

LEDs for architectural use were just arriving on the market. Blue LEDs only became “practical” light sources around 1997-1998; two of the first big examples of their implementation were the massive LED screen used in U2’s PopMart concert tour in 1997 and the original curving NASDAQ sign in Times Square in 1999. LED screen technology held great promise at that time, even given the embryonic level of the technology. I attended the LDI conference for theatrical lighting technologies in either 1997 or 1998; at that conference was an early LED screen (I can’t remember the manufacturer who made it) that consisted of printed circuit boards crudely connected by flexible wires, allowing the screen to be rolled up for transport.

Once I saw that screen, plus the architectural scale of the U2 stage design, the future of architectural lighting was obvious to me: Lighting will become a material, one filled with digital content. It was just a matter of time.

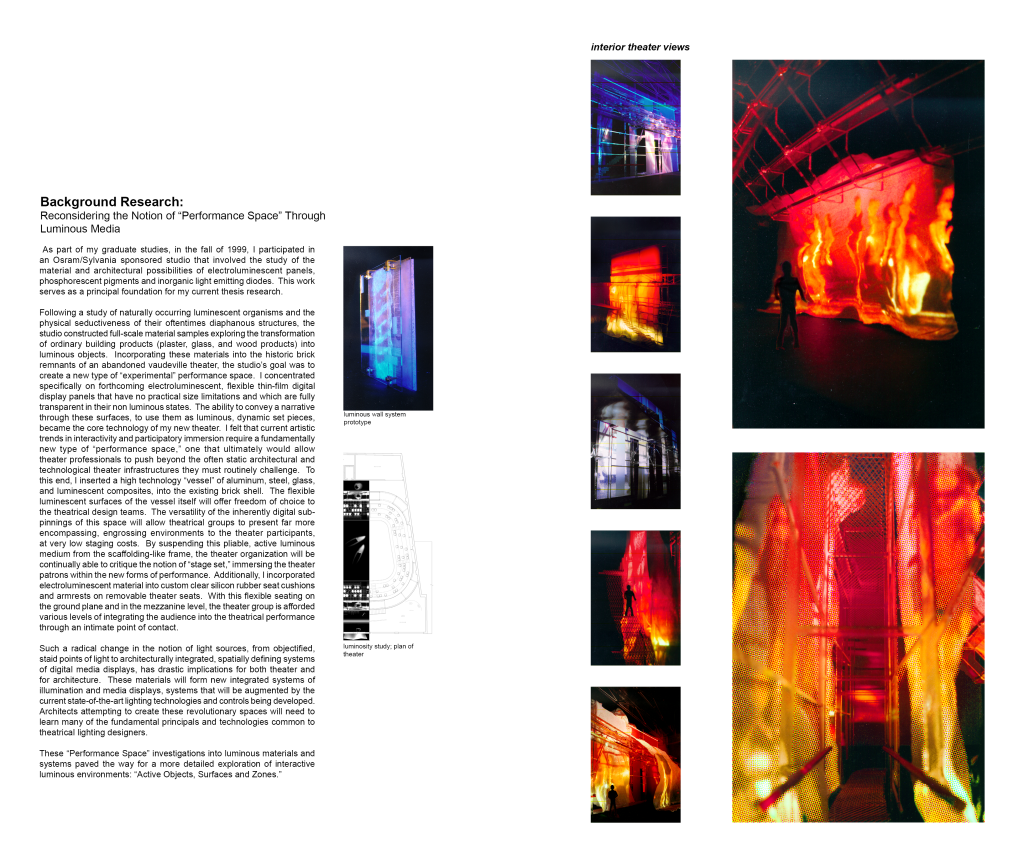

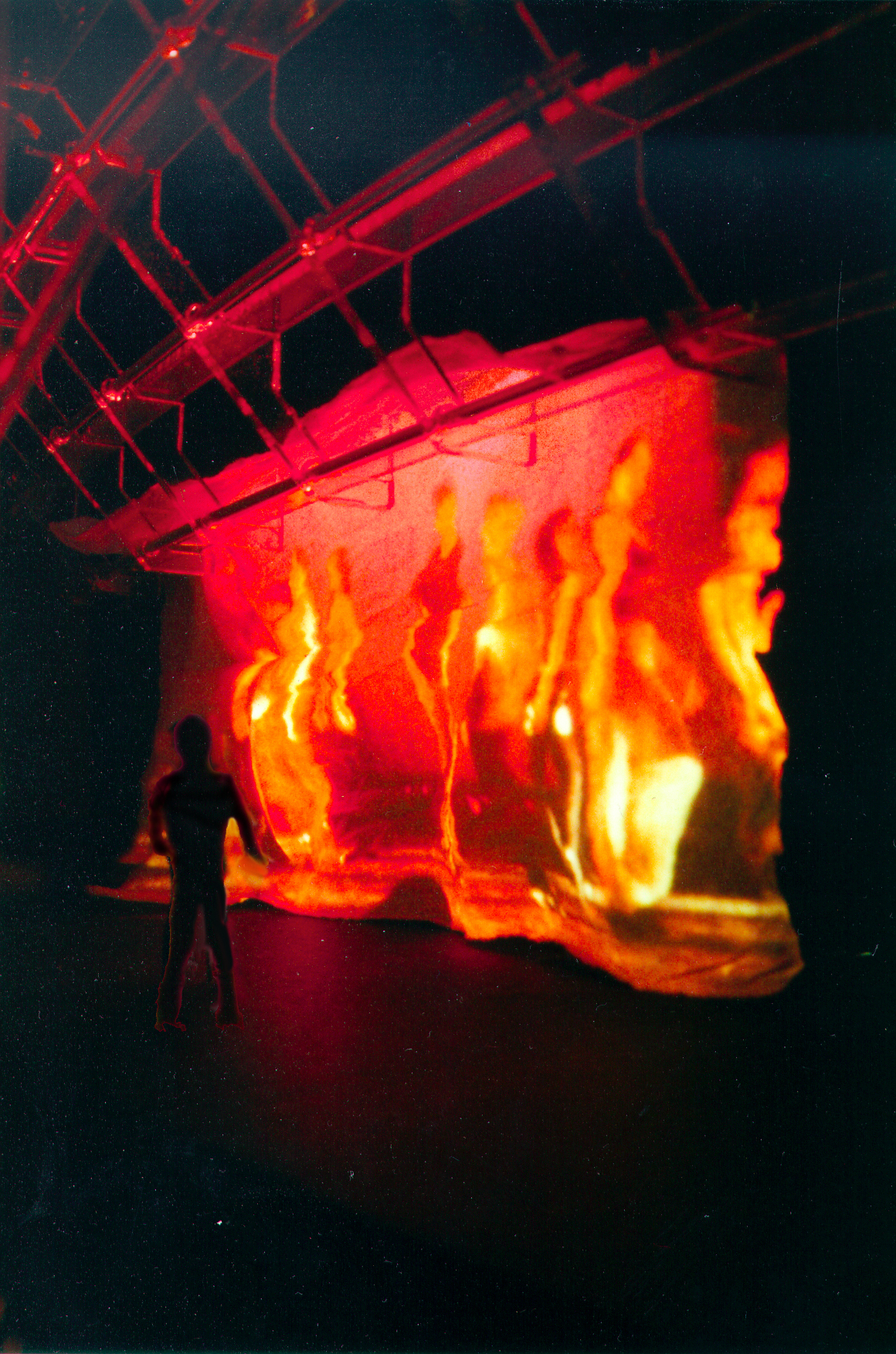

And in 1999, during a wild design studio taught by local Boston architect Sheila Kennedy and sponsored by OSRAM Sylvania, titled Bugs, Fish, Floors & Ceilings: Luminous bodies and the contemporary problem of material presence, we explored that very notion, that light could become a material. I took the topic in a more theoretical direction, proposing a soft fabric screen that emitted digital content for use in a modern theater.

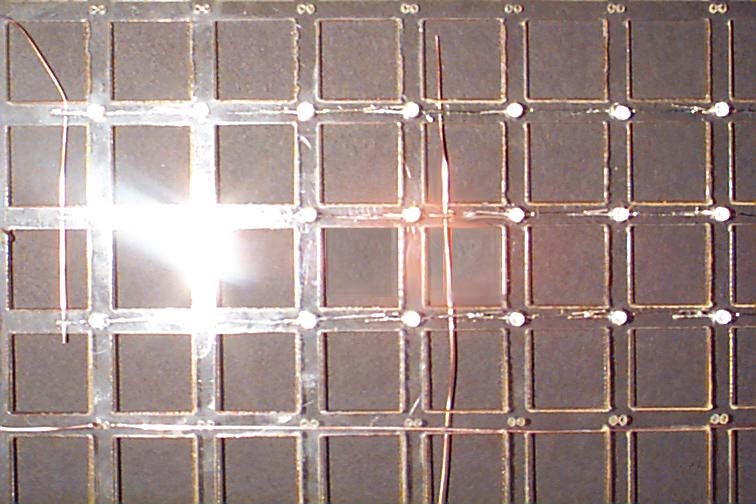

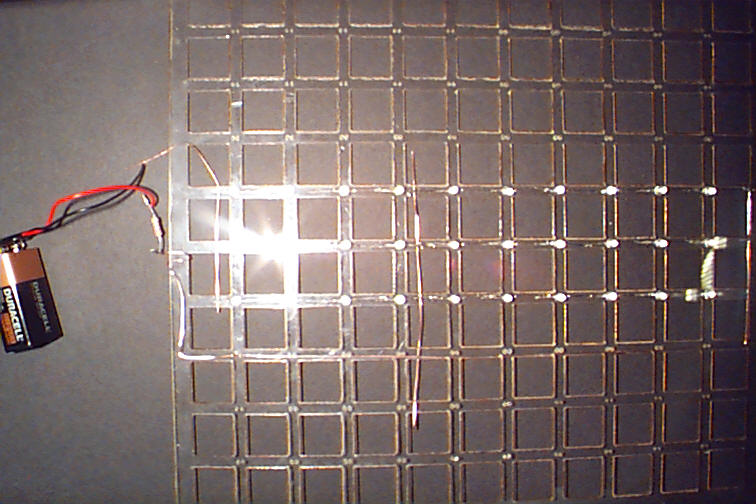

I made a conceptual model that attached 5mm LEDs onto a flexible, clear polycarbonate sheet; I proposed that in the future, the wiring would be fundamentally integrated into the material substrate – PCBs would disappear. In hindsight, I was right on the money with that prediction – as evidenced by the emergence of flexible, printed electronics. Below are the only images I have of the model (sorry for the bad images – remember – I was shooting on film! Film!!!!!!).

Internet Commerce

At the time of my thesis, internet retailing was also just in its infancy, but the potential to transform “brick-and-mortar” retail was obvious. The challenge was how to fuse the ethereal digital world into the physical retail world.

Sensors

Experiments by multi-media artists, mostly using the MIDI communications protocol for digital instruments, showed the tremendous promise of using electronic sensors for interacting with digital media. An early example was the 1995 I-CubeX system from Infusion Systems (which, amazingly, is still around after all these years), which offered an extensive catalogue of sensor technologies that could be implemented in the system.

Part III: The technologies come together…now what are the implications?

OK, so let’s summarize: In 1999, we had:

- LED multi-channel fixtures/luminaires

- The ability to network these LED fixtures

- The ability to connect them to the Internet

- The potential for light to become a building-material

- The potential to use sensors to physically interact with digital media…potentially in an application like a retail store.

So from this technological base, I started to explore how to use interactive control of lighting and digital media for retail environments.

Part IV: Exploring the concept of dynamically controlled space

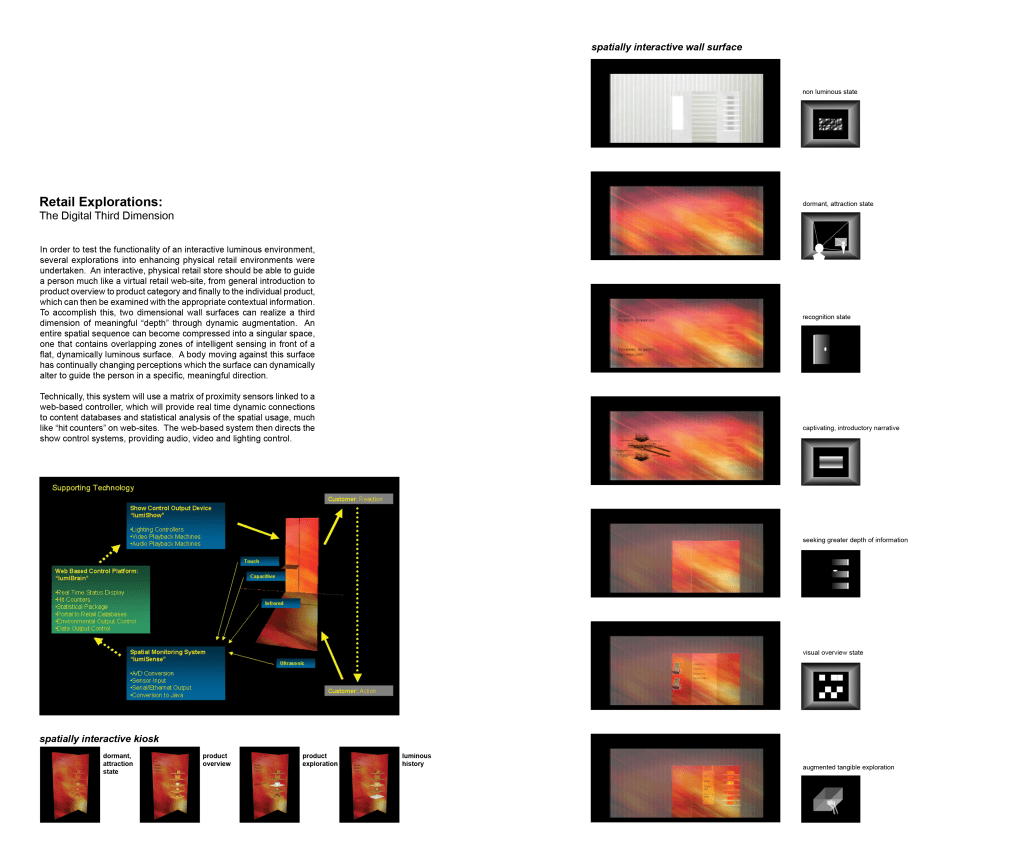

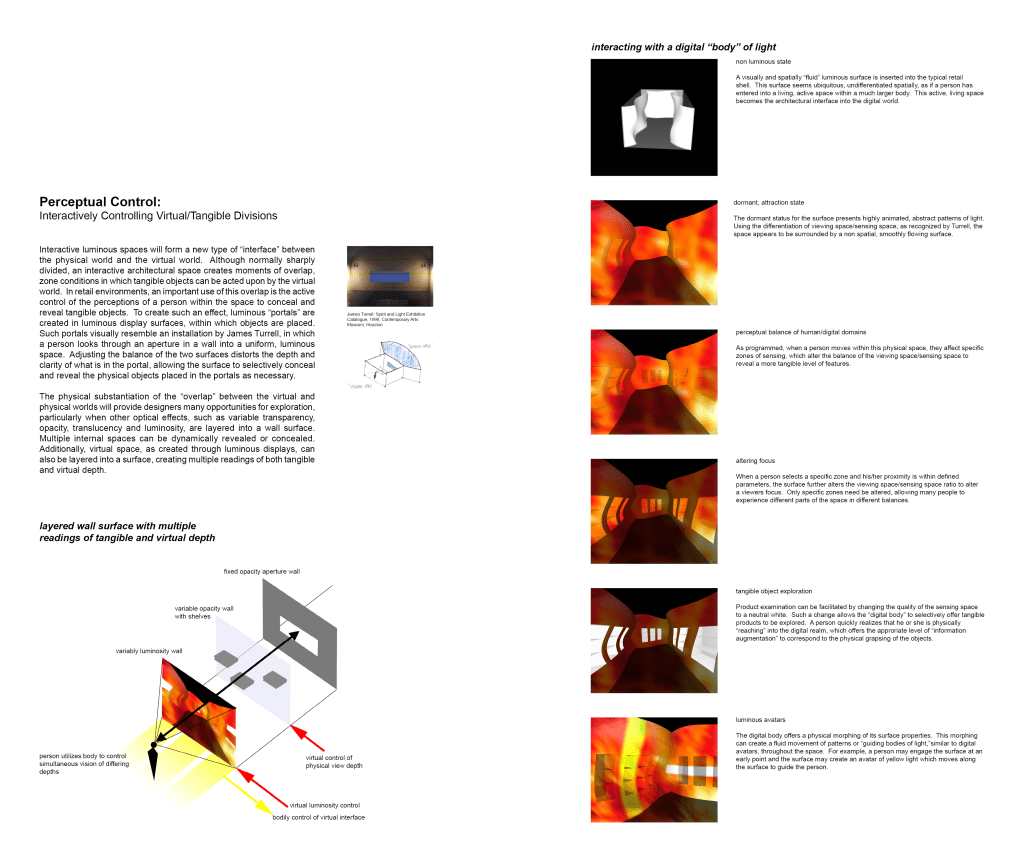

As you can see in the front section of the thesis book, I explored and diagrammed a language of “active” objects, surfaces and zones.

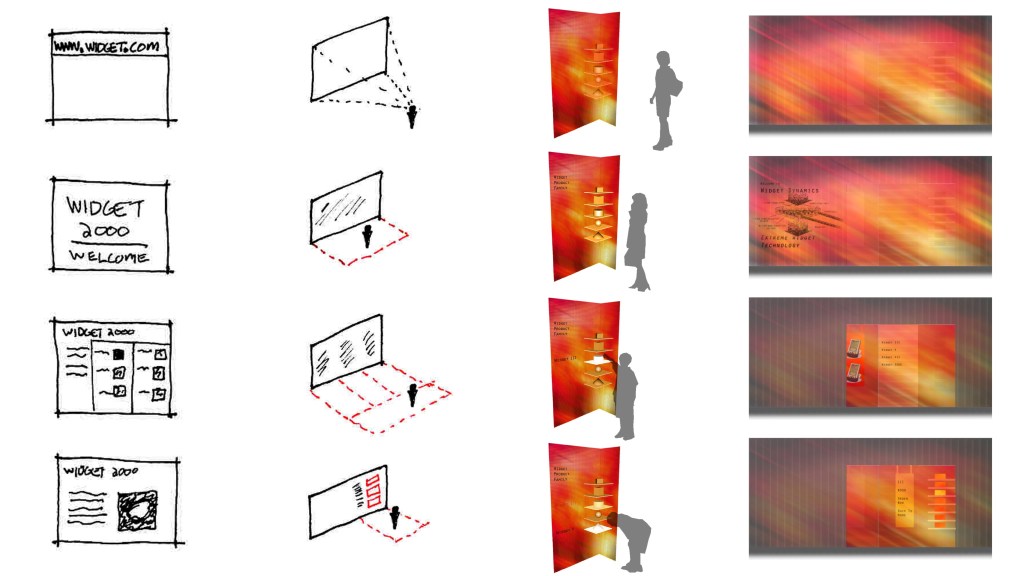

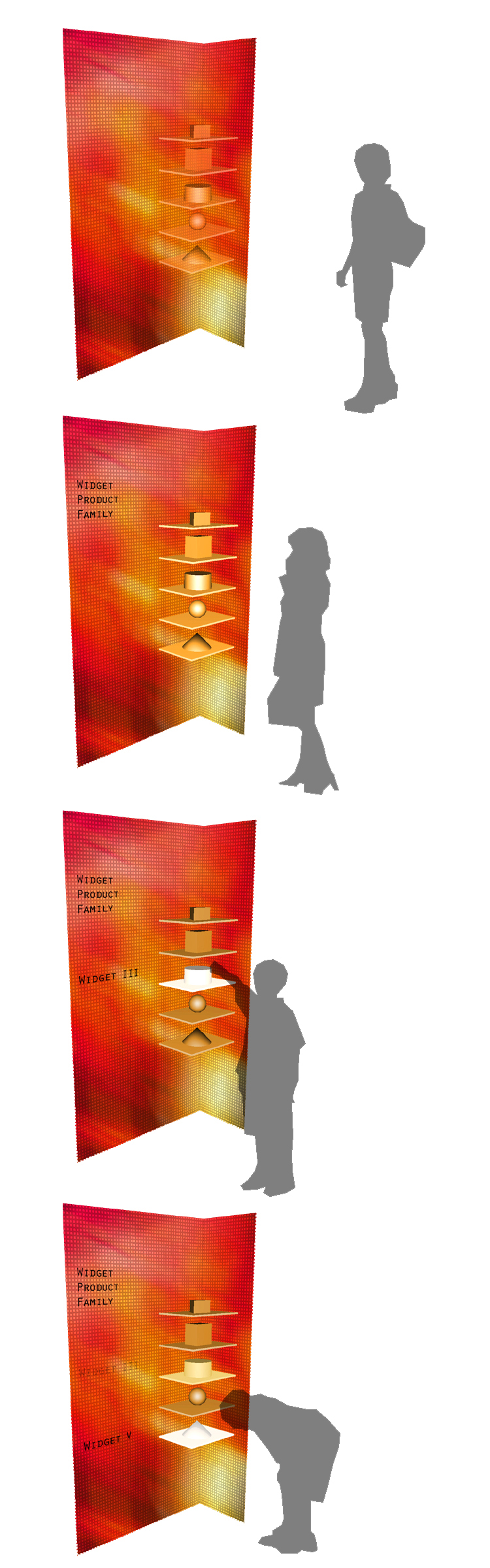

I realized that what we now call “web 1.0” e-commerce sites all had a distinct spatial progression: A splash screen, a category page, then a product page. This could be translated into spatial zoning and a progression in a physical retail store.

Proximity sensors could detect where a person was in this progression; dynamic luminous surfaces and shelving could act as the visual guide. Below is an example of an interactive kiosk I proposed:

The technology platform was fairly easy to define at that point. Here’s the fundamental diagram for a system I nicknamed “LumiSense”:

But here’s the kicker – what I drew is effectively the modern Internet of Things (with the “Internet of Things” being a term coined almost at the same time, in 1999). My diagram effectively foreshadowed the next two decades of lighting controls development. A damn shame I went to the GSD (which had no competent IP program in place) versus someplace like MIT’s Media Lab – I might have written some incredibly fundamental patents!
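As a rough illustration of the zoning idea described in this part, the web-style progression (splash, category, product) can be mapped onto distance bands reported by a proximity sensor. This is only a sketch under stated assumptions: the distance thresholds, the `Zone` names, and the `zone_for_distance` function are all hypothetical and do not come from the thesis.

```python
from enum import Enum


class Zone(Enum):
    AWAY = "away"          # nobody within range of the display
    SPLASH = "splash"      # far away: attract attention, like a splash screen
    CATEGORY = "category"  # mid-range: present a group of products
    PRODUCT = "product"    # close up: focus on a single item


# Hypothetical upper bounds in meters, ordered from nearest to farthest.
ZONE_LIMITS = [
    (1.0, Zone.PRODUCT),
    (3.0, Zone.CATEGORY),
    (6.0, Zone.SPLASH),
]


def zone_for_distance(distance_m: float) -> Zone:
    """Return the presentation zone for a visitor at the given distance."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    for limit, zone in ZONE_LIMITS:
        if distance_m <= limit:
            return zone
    return Zone.AWAY


# A visitor walking toward the display passes through each stage in turn.
approach = [zone_for_distance(d) for d in (8.0, 5.0, 2.0, 0.5)]
```

The luminous surfaces and shelves would then switch their content or lighting state whenever the zone changes, much as a website moves from landing page to product page.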

Part V: Prototype #1

One of the mandates of the technical thesis track was to prototype. Luckily, I reached out to Kevin Dowling of Color Kinetics to pitch my thesis, and Kevin was happy to support me by lending me some of CK’s early products: small color-changing LED spot lights, flood lights, and cove lighting. I also was able to borrow a Horizon controller to experiment with HTML control. The problem for me was how to get these point- and linear-format light fixtures to emulate a plane of light, and how to simulate sensor control from a theatrical console.

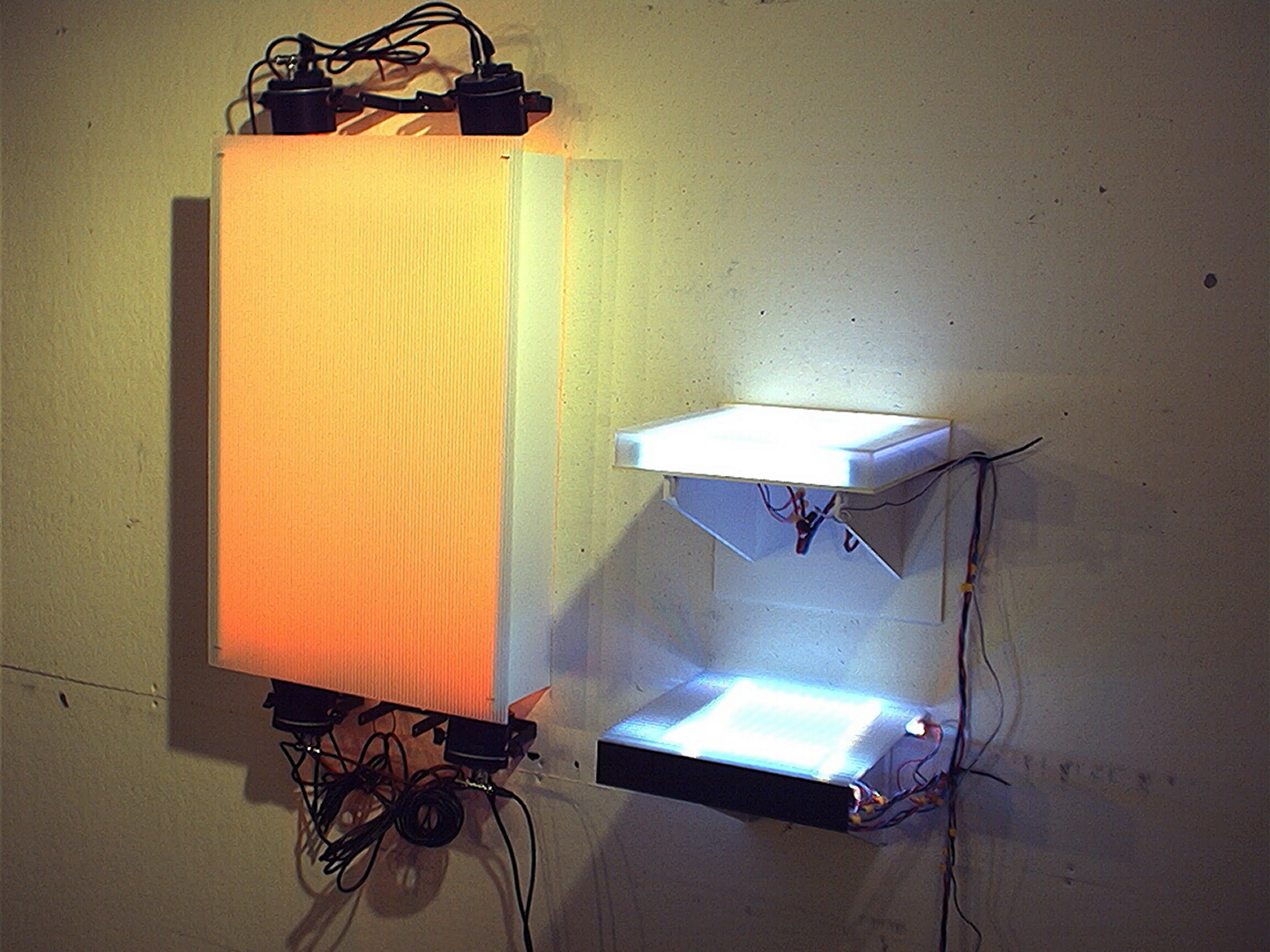

The first prototype setup used 4 CK color changing spots in a typical light box to emulate a low-resolution luminous surface, next to two distinct glowing shelves with CK cove lights.

The Horizon controller luckily included two contact closure inputs, which I hacked with an IR near-range sensor and an ultrasonic far-range sensor, to at least demo some “live” proximity interactions.
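The logic of a two-trigger setup like this can be sketched in a few lines. This is not the Horizon’s actual programming interface; the cue names and the `select_cue` function are hypothetical, and the sketch simply assumes each sensor closes its contact while someone is within its range.

```python
def select_cue(far_triggered: bool, near_triggered: bool) -> str:
    """Pick a lighting cue from the state of two contact-closure inputs.

    far_triggered:  the far-range (ultrasonic) sensor sees someone approaching.
    near_triggered: the near-range (IR) sensor sees someone right at the display.
    """
    if near_triggered:
        # The near sensor wins, even if the far sensor is also closed.
        return "product_focus"
    if far_triggered:
        return "attract"
    return "idle"


# Walk through a visit: nobody there, approaching, at the shelf, walking away.
visit = [
    select_cue(False, False),
    select_cue(True, False),
    select_cue(True, True),
    select_cue(False, False),
]
```

With only two inputs there are just three meaningful states, which is why a pre-scripted theatrical console could stand in for a true sensor-driven system in a demo.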

The architecture professors on the jury, though, didn’t like how fragmented that presentation was; they wanted me to somehow unify the design.

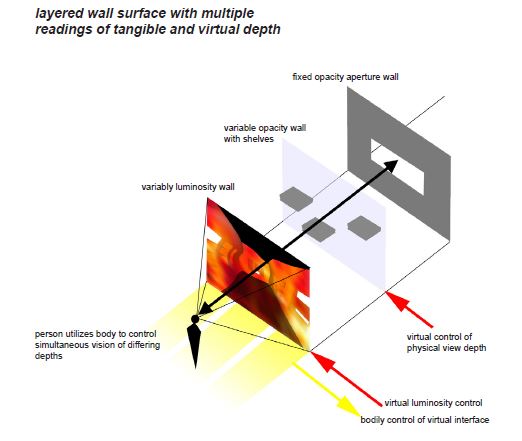

Part VI: Exploring the concept of layered dynamic presentations

Michelle Addington pressed me, though, to take the concept even further. She was researching a variety of material developments, including variable-translucency LCD glass, that hinted at a future where more sophisticated layering of information could conceivably be implemented – selectively hiding or revealing space, visual data, or even physical merchandise. In 1999 I got as far as these sketches:

With today’s technologies, this concept of “layering visual information” is a bit more feasible. Very large LCD monitors, LED screens with very fine pixel pitch, transparent LCD screens, variable opacity LCD glass, and digital projections/projection mapping technologies could be combined to create some interesting plays of depth and focus control. I think there is a lot to be explored here, still to this day…

But back in 1999, this “layered” concept led me to the second prototype:

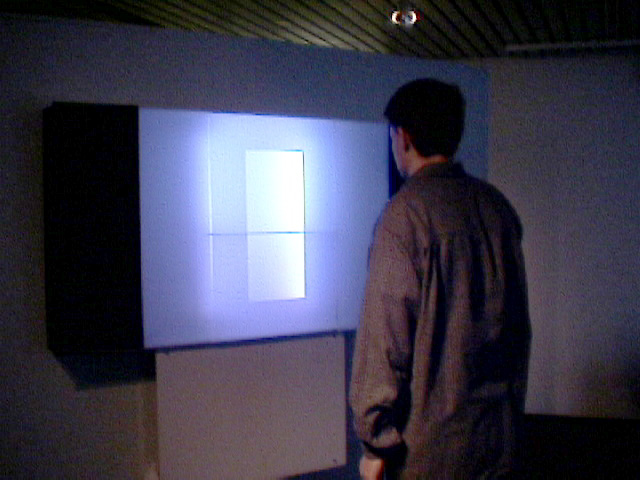

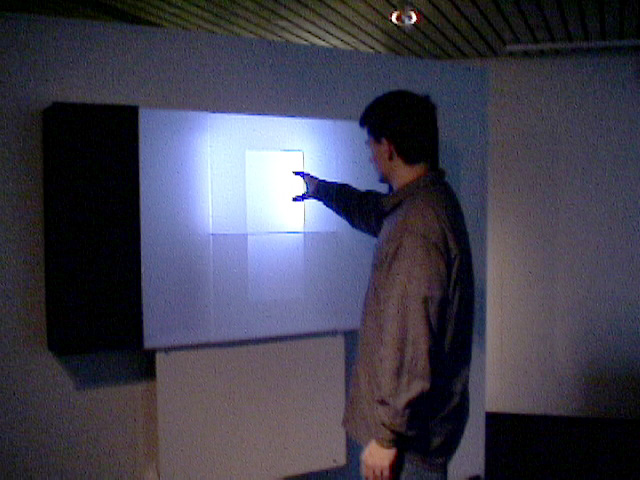

Part VII: Prototype #2

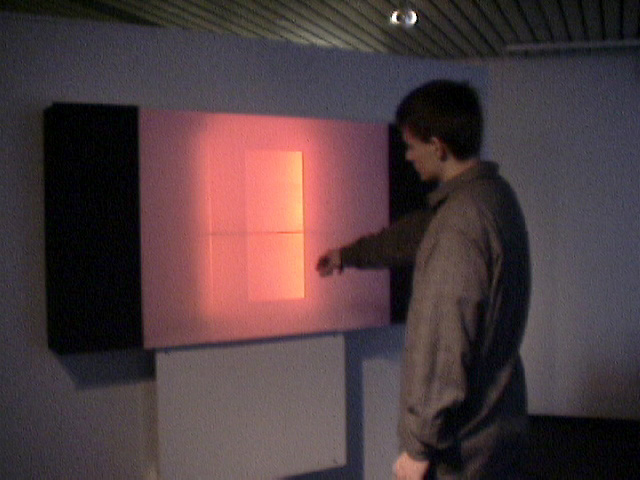

I devised a configuration using corrugated plastic sheeting to create layered front and back translucent surfaces, with shelves “hidden in plain sight” in the middle aperture. I conceived of the effect very much like a James Turrell “ganzfeld” piece. Between scripting the effects and the two triggers I could use, I was able to convincingly “fake” the final effect for the jury.

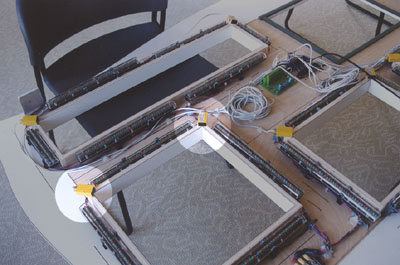

Inside the prototype wall.

I also programmed the wall to show emotive capability, such as a dramatic sunrise effect:

Below is a video of the two demos (shot on Hi8…I’m so old!) – the interactive demo starts at 4:25.

This demo seems so simple nowadays, but back in 1999 this was truly unusual. Most of the professors and critics had never seen an effect such as this outside of a theatrical performance, and certainly not from something that seemed like an architectural surface.

Part VIII: Cool concepts! Soooo…what happened???

Long story short, my timing was impeccably awful: The dot-com bubble burst right when I graduated. No one wanted to hear anything about e-commerce, much less some crazy ideas about integrating e-commerce into physical stores. Since I had no programming abilities myself, I couldn’t start creating the “LumiSense” interactive store control system as I had conceived of it. And my job offer at CK evaporated as they had to suddenly cut jobs (which is why I didn’t work there until 2005). Then 9/11 happened, which really threw cold water on the idea of a startup.

I did win some prestigious recognition for the project: Awards in ID Magazine and Interiors, publication in Professional Lighting Design, and I was asked to present the topic to the European Lighting Design Association in Milan.

Kevin Dowling realized the long-term value of the concepts and was a big supporter. After I graduated I offered him a design concept for an interactive tradeshow exhibition for CK’s light fixtures, which he pushed ahead with me. The display had recessed pockets sized for each product, internally lit around the perimeter with CK cove lighting, with the products mounted so they appeared to float in the middle. Kevin used industrial IR sensors to detect when someone reached for a product; this triggered a light show that focused on the product being touched and an LCD screen in the middle displayed corresponding product data.

The display premiered at Color Kinetics’ Lightfair booth in 2002. Kevin and I thought this was going to be a revolutionary piece – but in reality, hardly anyone “got it”. People were still simply baffled by LED lighting alone – most couldn’t even conceive of the potential of connecting the physical world to the digital world via Internet-connected sensing.

Later, while I worked at Color Kinetics, I pitched the concept for a live interactive controller (as opposed to a pre-scripted setup like most lighting controllers), but unfortunately it was never deemed a priority and never made it to the product roadmap. When Color Kinetics was bought by Philips in 2007, I soon had enough and quit.

Ironically, about the same time, an internal Philips venture in Europe was launched called Philips Retail Solutions – focusing on interactive lighting and digital content control for retail applications. After 3-4 years of trying, the venture never made much money and was shuttered. They simply struggled to convince retailers of the potential of interactive presentations.

Part IX: Conclusion

There is obvious potential for interactive lighting, whether for retail, or entertainment, or for creating a more pleasant, tailored environment in general. There remains clear opportunity to fuse the digital world with the physical world, to treat the physical built environment as a portal to the virtual world. And now with all the recent technology developments like smartphones, coded light, etc. there are many additional opportunities to create personalized interactions with “smart” architectural environments. I’m hoping that some application will “catch” and deliver value to at least one market segment, driving further exploration and development in the area overall.